NekoImageGallery

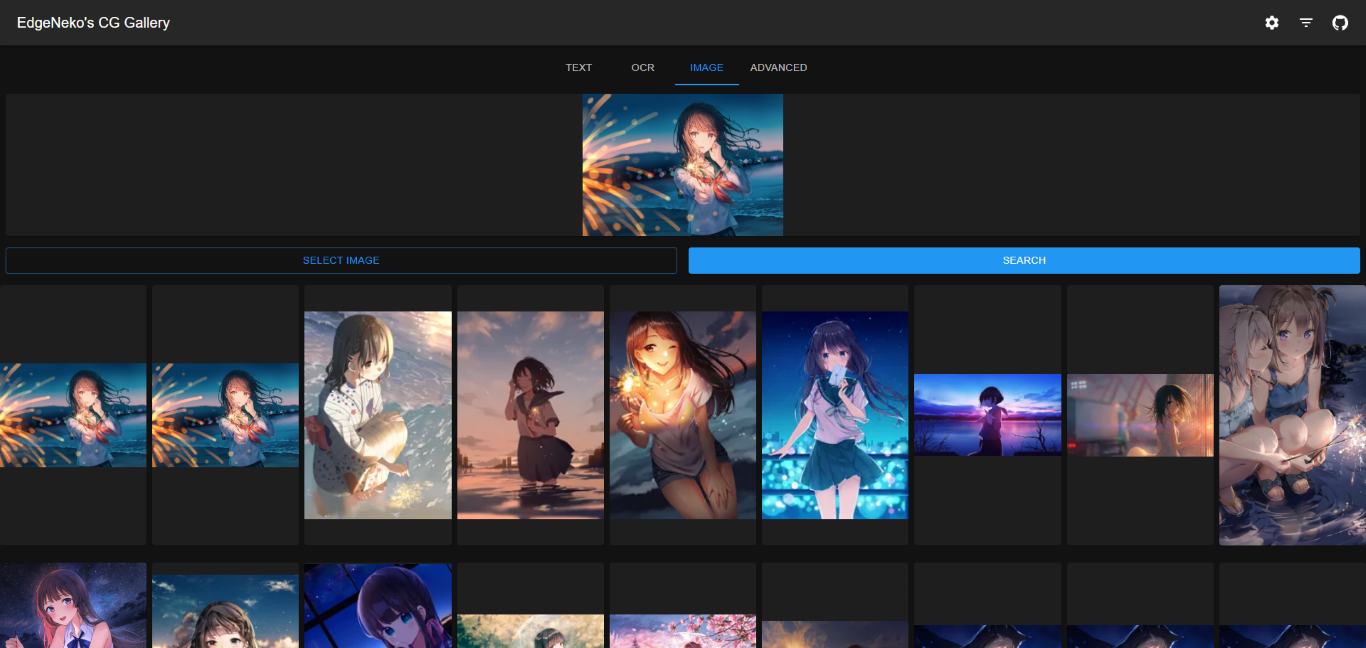

An online AI image search engine based on the CLIP model and the Qdrant vector database. Supports keyword search and similar image search.

✨ Features

- Use the CLIP model to generate 768-dimensional vectors for each image as the basis for search. No manual annotation or classification is needed, and the number of searchable categories is unlimited.

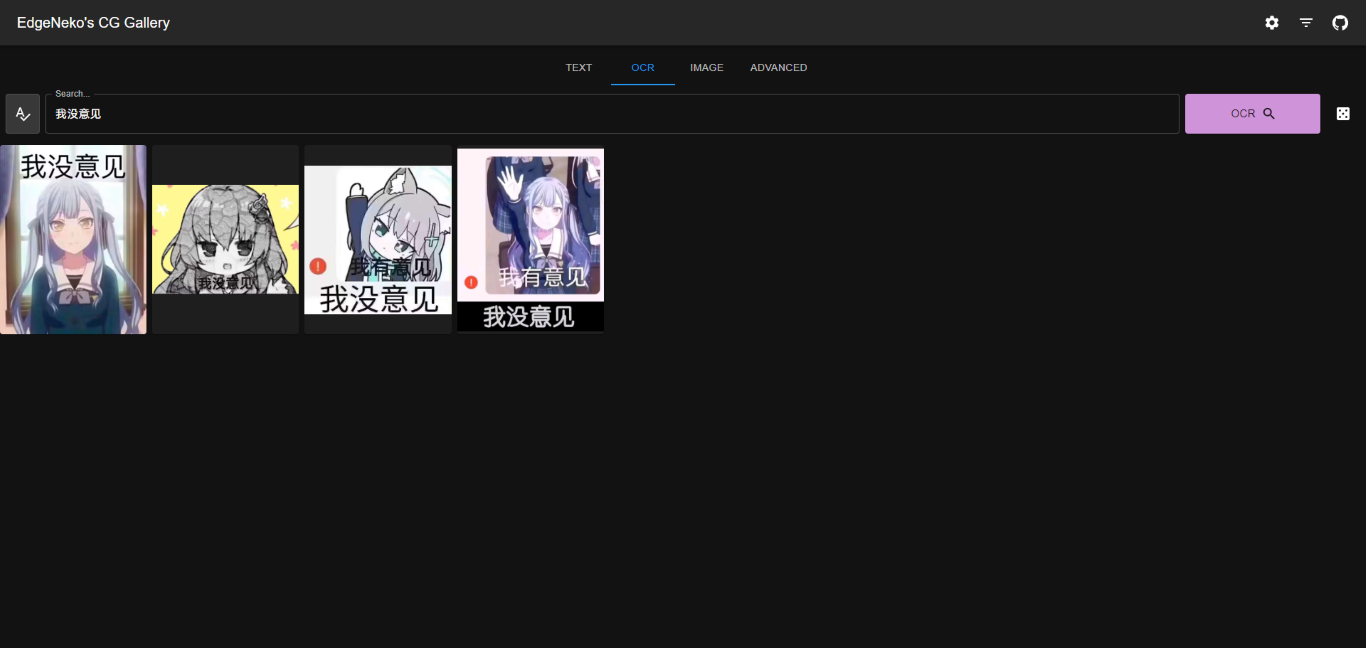

- OCR text search is supported: PaddleOCR extracts text from images, and BERT generates text vectors for search.

- Use Qdrant vector database for efficient vector search.
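To make the idea of vector-based search concrete, here is a minimal sketch in plain Python. It is not NekoImageGallery's code: the toy 4-dimensional vectors stand in for real 768-dimensional embeddings, `cosine_similarity` and `rank_images` are hypothetical helper names, and cosine similarity is assumed as the comparison metric. In a real deployment the vector database performs this comparison using an index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_images(query_vector, image_vectors, top_k=2):
    """Return the top_k image ids, most similar to the query first."""
    scored = [
        (cosine_similarity(query_vector, vec), image_id)
        for image_id, vec in image_vectors.items()
    ]
    scored.sort(reverse=True)
    return [image_id for _, image_id in scored[:top_k]]


# Toy vectors (made up for this example), one per indexed image.
image_vectors = {
    "cat.png": [0.9, 0.1, 0.0, 0.1],
    "dog.png": [0.1, 0.9, 0.1, 0.0],
    "kitten.png": [0.8, 0.2, 0.1, 0.1],
}

# Pretend this is the embedding of a keyword query or of a query image.
query = [1.0, 0.0, 0.0, 0.0]
print(rank_images(query, image_vectors))
```

Because keyword queries and images are both reduced to vectors, the same ranking step can serve keyword search and similar image search.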

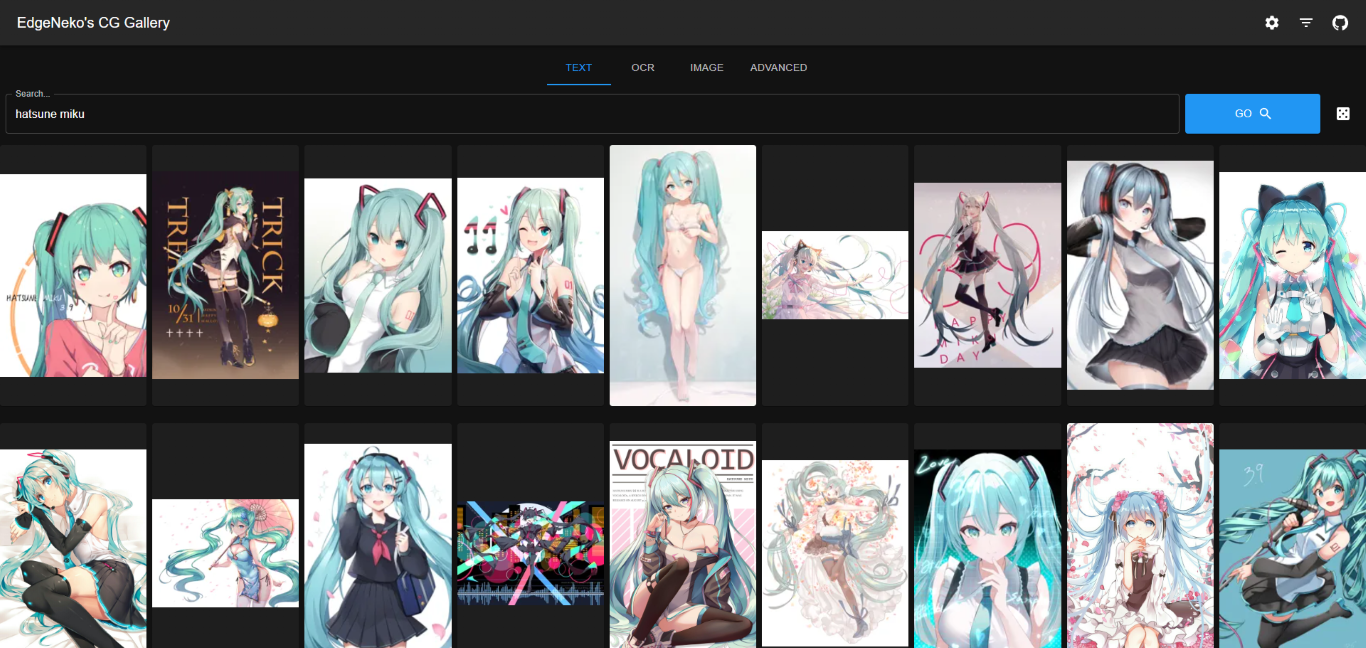

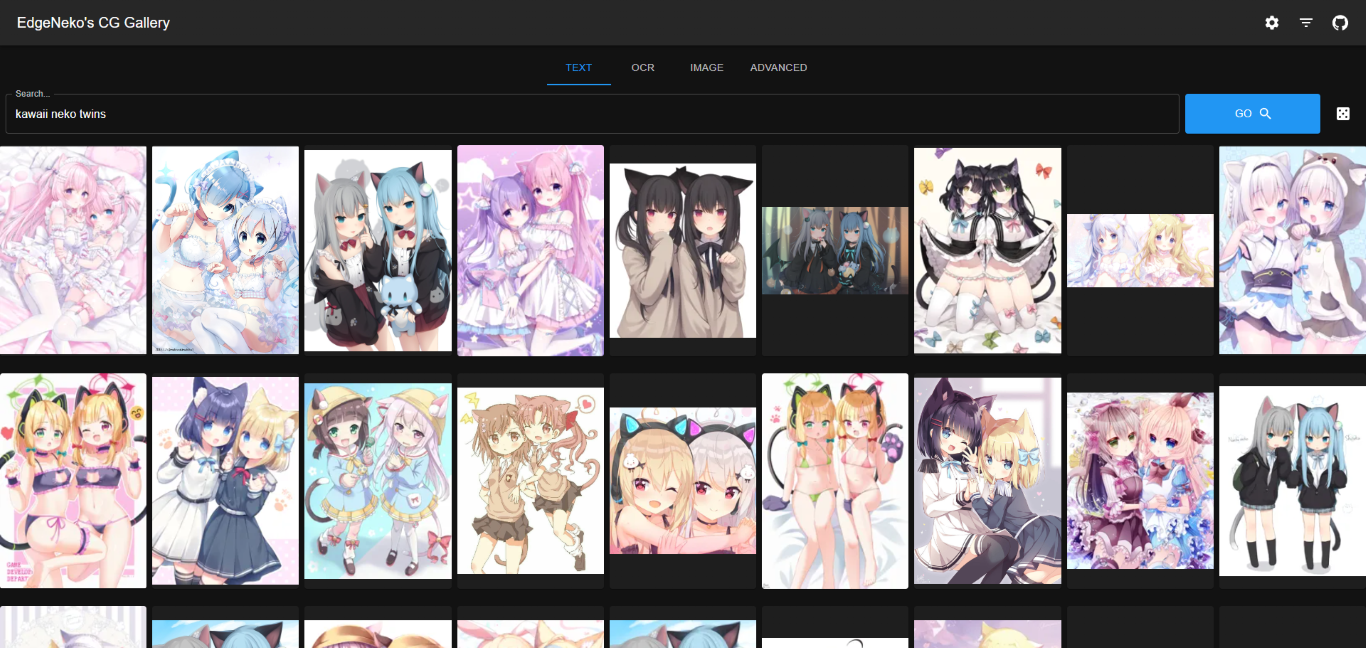

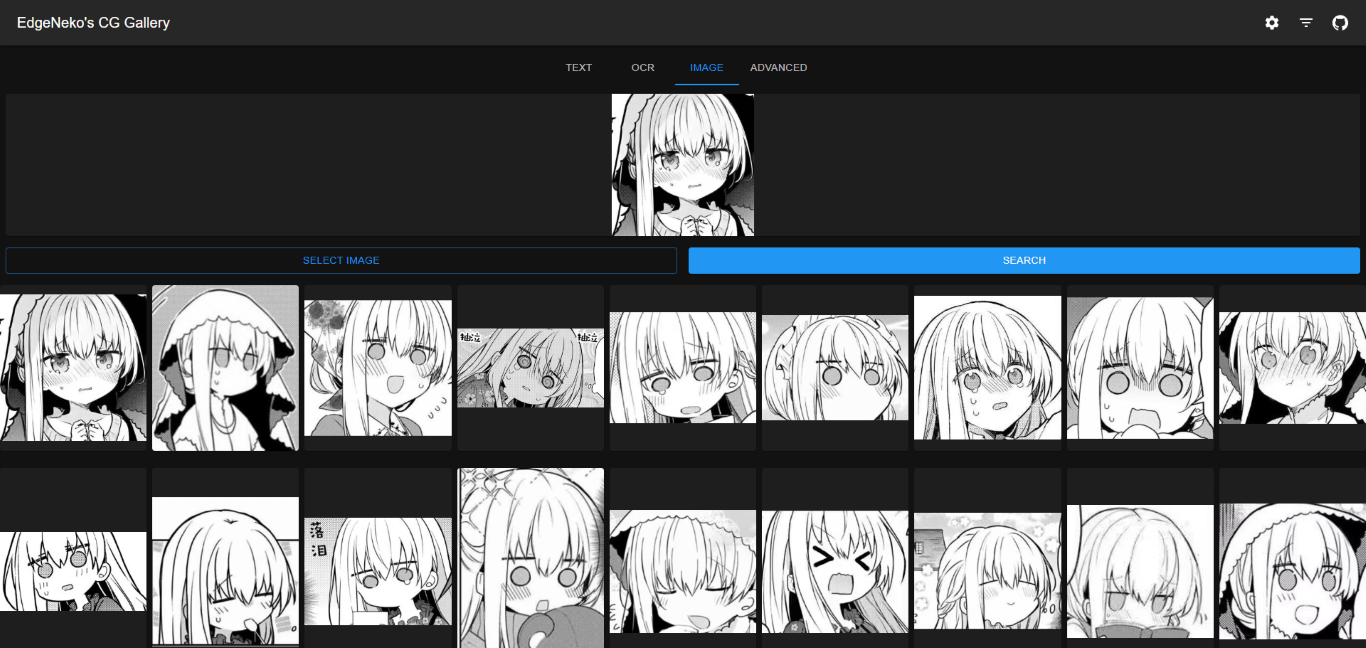

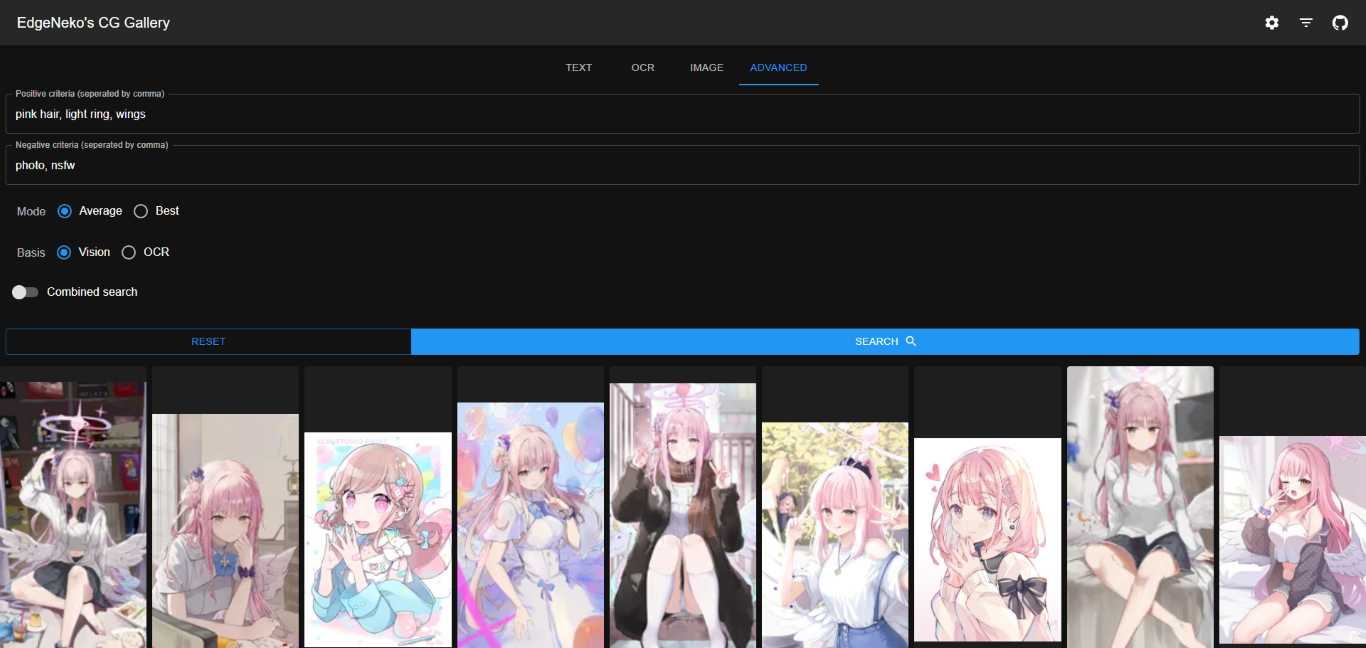

📷Screenshots

The above screenshots may contain copyrighted images from different artists, please do not use them for other purposes.

✈️ Deployment

🖥️ Local Deployment

Choose a metadata storage method

Qdrant Database (Recommended)

In most cases, we recommend using the Qdrant database to store metadata. The Qdrant database provides efficient retrieval performance, flexible scalability, and better data security.

Please deploy the Qdrant database according to the Qdrant documentation. It is recommended to use Docker for deployment.

If you don’t want to deploy Qdrant yourself, you can use the online service provided by Qdrant.

Local File Storage

Local file storage directly stores image metadata (including feature vectors, etc.) in a local SQLite database. It is only recommended for small-scale deployments or development deployments.

Local file storage does not require an additional database deployment process, but has the following disadvantages:

- Local storage does not index and optimize vectors, so the time complexity of all searches is O(n). Therefore, if the data scale is large, the performance of search and indexing will decrease.

- Using local file storage will make NekoImageGallery stateful, so it will lose horizontal scalability.

- When you want to migrate to Qdrant database for storage, the indexed metadata may be difficult to migrate directly.
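The O(n) cost mentioned above can be pictured with a small sketch. This is an illustration only, not the project's storage code: the `images` table, its columns, and the helper names `to_blob`, `from_blob`, and `linear_search` are all hypothetical. It stores vectors as packed 32-bit floats in an in-memory SQLite database and scores every row against the query, which is what an unindexed search has to do.

```python
import sqlite3
from array import array


def to_blob(vector):
    """Pack a list of floats into bytes (32-bit floats)."""
    return array("f", vector).tobytes()


def from_blob(blob):
    """Unpack bytes produced by to_blob back into a list of floats."""
    values = array("f")
    values.frombytes(blob)
    return list(values)


def linear_search(conn, query, top_k=1):
    """Score every stored vector against the query: O(n) in the row count."""
    scanned = 0
    scored = []
    for image_id, blob in conn.execute("SELECT id, vector FROM images"):
        scanned += 1
        vec = from_blob(blob)
        score = sum(q * v for q, v in zip(query, vec))  # dot product
        scored.append((score, image_id))
    scored.sort(reverse=True)
    return [image_id for _, image_id in scored[:top_k]], scanned


# Hypothetical schema, created in memory for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE images (id TEXT PRIMARY KEY, vector BLOB)")
rows = {"a.png": [1.0, 0.0], "b.png": [0.0, 1.0], "c.png": [0.5, 0.5]}
conn.executemany(
    "INSERT INTO images VALUES (?, ?)",
    [(image_id, to_blob(vec)) for image_id, vec in rows.items()],
)

best, scanned = linear_search(conn, [0.0, 1.0])
print(best, scanned)
```

The `scanned` counter always equals the number of stored images, which is why search time grows linearly with the collection size when no vector index is available.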

Deploy NekoImageGallery

- Clone the project directory to your own PC or server.

- It is highly recommended to install the dependencies required for this project in a Python venv virtual environment. Run the following command:

      python -m venv .venv
      . .venv/bin/activate

- Install PyTorch. Follow the PyTorch documentation to install the torch version suitable for your system using pip.

  If you want to use CUDA acceleration for inference, be sure to install a CUDA-supported PyTorch version in this step. After installation, you can use `torch.cuda.is_available()` to confirm whether CUDA is available.

- Install other dependencies required for this project:

      pip install -r requirements.txt

- Modify the project configuration file inside `config/`, you can edit `default.env` directly, but it’s recommended to create a new file named `local.env` and override the configuration in `default.env`.

- Initialize the Qdrant database by running the following command:

      python main.py --init-database

  This operation will create a collection in the Qdrant database with the same name as `config.QDRANT_COLL` to store image vectors.

- (Optional) In development deployment and small-scale deployment, you can use the built-in static file indexing and service functions of this application. Use the following command to index your local image directory:

      python main.py --local-index <path-to-your-image-directory>

  This operation will copy all image files in the `<path-to-your-image-directory>` directory to the `config.STATIC_FILE_PATH` directory (default is `./static`) and write the image information to the Qdrant database.

  Then run the following command to generate thumbnails for all images in the static directory:

      python main.py --local-create-thumbnail

  If you want to deploy on a large scale, you can use OSS storage services like MinIO to store image files in OSS and then write the image information to the Qdrant database.

- Run this application:

      python main.py

  You can use `--host` to specify the IP address you want to bind to (default is 0.0.0.0) and `--port` to specify the port you want to bind to (default is 8000).

- (Optional) Deploy the front-end application: NekoImageGallery.App is a simple web front-end application for this project. If you want to deploy it, please refer to its deployment documentation.
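The configuration step above relies on `local.env` overriding `default.env`. The sketch below shows that layering idea in plain Python. It is an assumption-laden illustration, not the project's actual loader: `parse_env` and `layered_config` are hypothetical helpers, the key names simply mirror the `config.QDRANT_COLL` and `config.STATIC_FILE_PATH` attributes mentioned above (the real keys in `default.env` may be spelled differently), and the values are made up.

```python
def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip()
    return values


def layered_config(default_text, local_text):
    """Start from the defaults, then let local settings override them."""
    config = parse_env(default_text)
    config.update(parse_env(local_text))
    return config


# Example file contents (hypothetical keys and values).
default_env = """
# shipped defaults
QDRANT_COLL=NekoImg
STATIC_FILE_PATH=./static
"""
local_env = """
# only the settings you want to change
QDRANT_COLL=my_gallery
"""

config = layered_config(default_env, local_env)
print(config)
```

Keeping overrides in a separate file means the shipped defaults stay untouched, so updating the project does not clobber local settings.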

🐋 Docker Deployment

About docker images

NekoImageGallery’s Docker images are built and released on Docker Hub, including several variants:

Where <version> is the version number or version alias of NekoImageGallery, as follows:

| Version | Description |
|---|---|
| latest | The latest stable version of NekoImageGallery |
| v0.1.0 | The specific version number (corresponds to Git tags) |
| edge | The latest development version of NekoImageGallery, may contain unstable features and breaking changes |

Prepare nvidia-container-runtime (CUDA users only)

If you want to use CUDA acceleration, you need to install nvidia-container-runtime on your system. Please refer to

the official documentation for installation.

Related documents:

- https://docs.docker.com/config/containers/resource_constraints/#gpu

- https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/install-guide.html#docker

- https://nvidia.github.io/nvidia-container-runtime/
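Once the runtime is set up, the `torch.cuda.is_available()` check from the local deployment steps is a quick way to confirm that PyTorch can actually see the GPU in a given environment. The wrapper below is a small sketch; `cuda_status` is a hypothetical helper name, and it degrades gracefully when PyTorch is not installed.

```python
def cuda_status():
    """Report whether PyTorch is installed and can see a CUDA device."""
    try:
        import torch  # only importable if PyTorch was installed
    except ImportError:
        return "torch-not-installed"
    return "cuda" if torch.cuda.is_available() else "cpu-only"


print(cuda_status())
```

A result of `cpu-only` on a machine with an NVIDIA GPU usually points to a CPU-only PyTorch build or a missing container runtime configuration.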

Run the server

- Download the `docker-compose.yml` file from the repository.

      # For cuda deployment (default)
      wget https://raw.githubusercontent.com/hv0905/NekoImageGallery/master/docker-compose.yml
      # For CPU-only deployment
      wget https://raw.githubusercontent.com/hv0905/NekoImageGallery/master/docker-compose-cpu.yml && mv docker-compose-cpu.yml docker-compose.yml

- Modify the docker-compose.yml file as needed.

- Run the following command to start the server:

      # start in foreground
      docker compose up
      # start in background (detached mode)
      docker compose up -d

📚 API Documentation

The API documentation is provided by FastAPI’s built-in Swagger UI. You can access the API documentation by visiting

the /docs or /redoc path of the server.
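As a quick smoke test after starting the server, the documentation paths can be probed from Python using only the standard library. This is a sketch under assumptions: the server is taken to be reachable at 127.0.0.1 on the default port 8000 mentioned earlier, and `docs_urls` and `is_reachable` are hypothetical helper names.

```python
import urllib.error
import urllib.request


def docs_urls(host="127.0.0.1", port=8000):
    """Build the Swagger UI and ReDoc URLs for a given server address."""
    base = f"http://{host}:{port}"
    return [f"{base}/docs", f"{base}/redoc"]


def is_reachable(url, timeout=3.0):
    """Return True if the URL answers with HTTP 200, False on any error."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        return False


if __name__ == "__main__":
    for url in docs_urls():
        state = "reachable" if is_reachable(url) else "not reachable"
        print(url, state)
```

If you bound the server to a different address or port with `--host` or `--port`, pass the same values to `docs_urls`.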

⚡ Related Project

These projects work with NekoImageGallery :D


♥ Contributing

There are many ways to contribute to the project: logging bugs, submitting pull requests, reporting issues, and creating suggestions.

Even if you have push access to the repository, you should create personal feature branches when you need them. This keeps the main repository clean and your workflow cruft out of sight.

We’re also interested in your feedback on the future of this project. You can submit a suggestion or feature request through the issue tracker. To make this process more effective, we’re asking that these include more information to help define them more clearly.

Copyright

Copyright 2023 EdgeNeko

Licensed under the GPLv3 license.
